Google Analytics can sometimes show results that leave you scratching your head. Use this guide to check you can trust the data it is displaying, so that you can be confident about the business decisions you are making with it.

So you have a website, a GA code was dropped on it a while ago, and now you’re finally thinking about getting serious with the data you collect. Perhaps you inherited some issues with the account from its previous caretaker. A poorly configured account could mean missing out on important data or working with misleading information. If you don’t want your campaigns (SEO, email, link-building, PPC, display etc.) to be pointless and you want your decisions to be truly data-driven, this guide will help you get started.

Set your date range to the last six months and let’s go.

1. Check yourself before you refer yourself

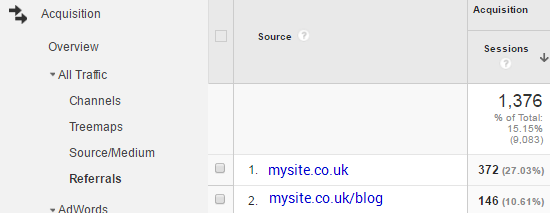

The first place to dive into is the referrals report, under All Traffic in the Acquisition section. Filter the sources by searching for your website.

If you’re seeing a large number of self-referrals, then you’re most likely missing tracking code somewhere or there’s a configuration problem. Leaving this unchecked will skew a lot of data in GA, from conversions to behaviour. It will compromise your data integrity and lead to bad business decisions.

To fix this, you’ll need to identify which pages are missing tracking code and then add the code. If this doesn’t fix the issue, then cross-domain tracking might be set up incorrectly. In either case it needs fixing. Do not simply add your domain to the referral exclusions list: that hides the symptom, while any untracked pages stay untracked.
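If you have the HTML of your pages to hand (from a crawl export, for example), a rough first pass at finding untracked pages could look like the sketch below. The `pages` input and the function name are hypothetical, and the pattern assumes Universal Analytics style IDs (`UA-…`) or a Google Tag Manager container ID (`GTM-…`) appears somewhere in the page source.

```python
import re

# Assumed ID shapes: Universal Analytics property IDs such as UA-1234567-1
# and Google Tag Manager container IDs such as GTM-ABC123.
TRACKING_ID = re.compile(r"\b(?:UA-\d{4,10}-\d{1,4}|GTM-[A-Z0-9]{4,8})\b")


def pages_missing_tracking(pages):
    """Return the paths whose HTML contains no recognisable tracking ID.

    `pages` maps a page path to its HTML source (hypothetical input).
    """
    return sorted(
        path for path, html in pages.items() if not TRACKING_ID.search(html)
    )


pages = {
    "/": "<script>ga('create', 'UA-1234567-1', 'auto');</script>",
    "/checkout": "<html><body>No analytics here</body></html>",
}
print(pages_missing_tracking(pages))
```

A page that passes this check can still be misconfigured, so treat the result as a shortlist to inspect by hand rather than a verdict.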

2. Tracking code

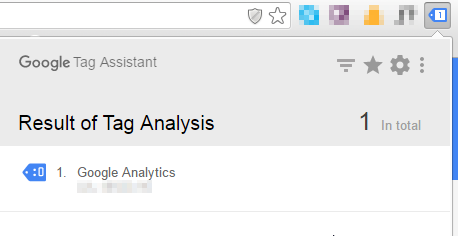

Check whether the tracking code being used on the website matches the tracking code in your account’s property settings. If they’re different then you could be losing valuable data.

You can check by viewing the source code of your website, but don’t draw any conclusions yet. Searching the source with Ctrl + F won’t give you much insight if the site’s tracking was implemented with Google Tag Manager. Luckily, a Chrome extension called Tag Assistant (by Google) will be able to tell you. Most importantly, it will tell you whether you’re using the Universal Analytics code or the older tracking code. (Hint: you should be using the first one.)

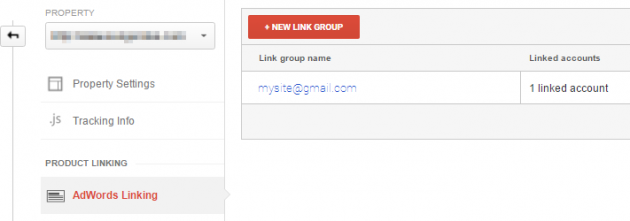
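For sites where the ID is written directly into the page, the comparison can be sketched in a few lines. This assumes Universal Analytics style IDs and a hypothetical helper name; an empty `found` list does not prove the page is untracked, since the ID may be loaded through Google Tag Manager instead.

```python
import re

# Assumed shape of a Universal Analytics property ID, e.g. UA-1234567-1.
UA_ID = re.compile(r"\bUA-\d{4,10}-\d{1,4}\b")


def compare_tracking_ids(html, expected_id):
    """Compare the UA IDs found in a page's source with the property's ID."""
    found = sorted(set(UA_ID.findall(html)))
    return {
        "found": found,
        # True only when the page carries exactly the expected ID.
        "matches": found == [expected_id],
    }


source = "<script>ga('create', 'UA-7654321-2', 'auto');</script>"
print(compare_tracking_ids(source, "UA-1234567-1"))
```

Here the page carries a different ID from the one in the property settings, which is exactly the situation where data quietly goes to the wrong place.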

3. AdWords linking

If you’re doing any paid activity through AdWords, it’s important to check whether your AdWords account has been linked to your Analytics account correctly. There may be some small data discrepancies for a number of reasons, but you need to ensure these are not the result of incorrect linking between the two accounts.

4. Channels

There are lots of things you can analyse when sifting through the Channels report – too many for this blog post. So here are two important ones.

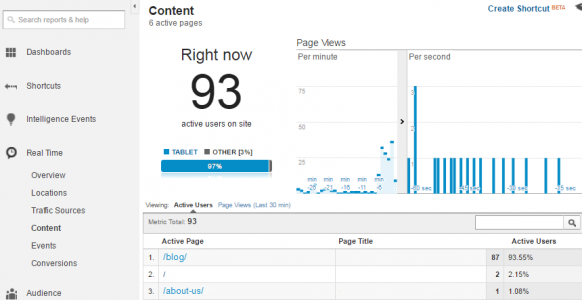

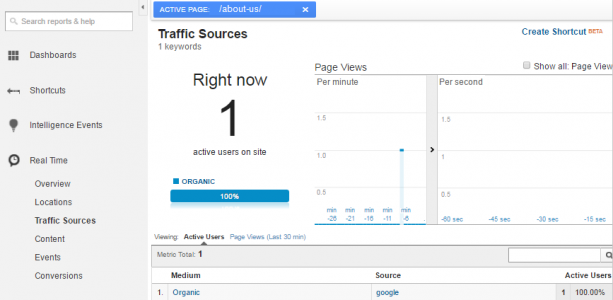

First, organic traffic might in some cases come through to your account as direct traffic. To check, head to Content under Real Time. Now, Google your site and find an organic link. Right, are you watching the Real Time report? Two screens would be very handy right about now.

Click through to your site via your organic listing and then through to a relatively low-traffic page, e.g. “about-us”. When you see the page appear in GA, click it to filter all real time results by that page (but don’t close the web page!). Then click on Traffic Sources and check whether you’ve come through as direct or organic.

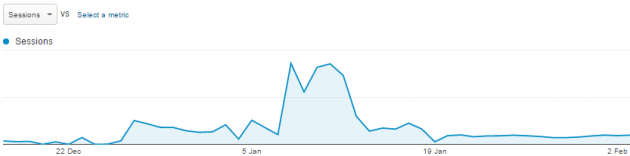

Second, be on the lookout for huge, sudden spikes in direct or referral traffic in your data. If a spike can’t be explained by your own marketing activity being misattributed, then you need to check for spam. Typically, this kind of obvious spam traffic will originate from a single location, have a bounce rate of close to 100% and spend close to 0 seconds on the site. Add city as a secondary dimension to your report and you’ll likely see all the traffic originates from the same place.
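If you export the spike to a spreadsheet, the three warning signs can be checked together. The sketch below is only an illustration: the row keys (`city`, `sessions`, `bounces`, `session_seconds`) and the thresholds are assumptions, not GA field names or official cut-offs.

```python
from collections import Counter


def looks_like_spam(rows, min_bounce_rate=0.95, max_avg_seconds=2.0,
                    min_city_share=0.8):
    """Flag traffic that bounces almost always, stays almost no time
    and comes overwhelmingly from one city.

    `rows` is a list of dicts, one per city (hypothetical export format).
    """
    total_sessions = sum(r["sessions"] for r in rows)
    if total_sessions == 0:
        return False

    bounce_rate = sum(r["bounces"] for r in rows) / total_sessions
    avg_seconds = sum(r["session_seconds"] for r in rows) / total_sessions

    # Share of sessions coming from the single busiest city.
    by_city = Counter()
    for r in rows:
        by_city[r["city"]] += r["sessions"]
    top_share = by_city.most_common(1)[0][1] / total_sessions

    return (bounce_rate >= min_bounce_rate
            and avg_seconds <= max_avg_seconds
            and top_share >= min_city_share)


spike = [
    {"city": "City A", "sessions": 980, "bounces": 975, "session_seconds": 300},
    {"city": "City B", "sessions": 20, "bounces": 10, "session_seconds": 600},
]
print(looks_like_spam(spike))
```

Requiring all three signs at once keeps a genuinely successful campaign, which also produces a spike, from being flagged.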

5. Spam traffic

Now that you’ve realised your data is infested, how do you stop it from happening again?

This is really simple but often overlooked. Google Analytics provides a bot filtering option within the View Settings. Checking the box will exclude bots and spiders that could really mess up your data. Google’s list of spiders and bots is continuously updated. Some may slip through but not for long (not long enough to wildly affect your data).

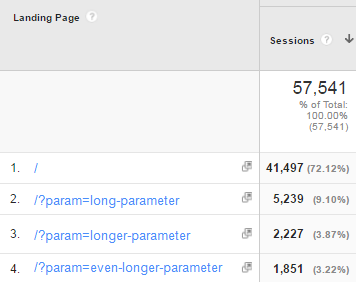

6. URL page parameters

Often when looking at pages on your site, you will see long strings attached to the end of your page URLs. This can make your reports hard to read and make it difficult to analyse the performance of particular pages.

Google’s search-and-replace filter will enable you to fine-tune your data within your Google Analytics views and make it more human-readable. In the example shown, we would use a regular expression to simplify our page paths by searching for ‘param’ and replacing it with an empty string. (Hint: regular expressions are a powerful way to filter your data in GA.)

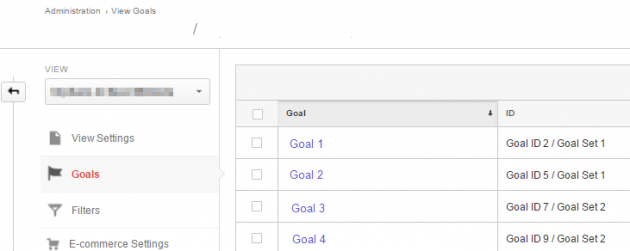
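It’s worth trying a pattern on a few real page paths before saving the filter. Here is a minimal sketch in Python, assuming the unwanted parameter is literally called `param` and that page paths carry no `#` fragment. Python’s regex flavour supports lookbehind, which GA’s filter fields may not, so use this to test your intent rather than as a pattern to paste straight in.

```python
import re


def strip_param(path, name="param"):
    """Remove one query parameter (and its value) from a page path."""
    # Drop "name=value" plus the "&" that follows it, if any. The lookbehind
    # makes sure we only match a whole parameter name, not e.g. "myparam".
    cleaned = re.sub(rf"(?<=[?&]){re.escape(name)}=[^&]*&?", "", path)
    # Tidy up a "?" or "&" left dangling at the end of the path.
    return re.sub(r"[?&]$", "", cleaned)


for path in ["/shoes?param=abc123",
             "/shoes?param=abc123&page=2",
             "/shoes?page=2&param=abc123"]:
    print(path, "->", strip_param(path))
```

The three sample paths cover the parameter appearing alone, first and last, which are the cases where a naive pattern tends to leave a stray `?` or `&` behind.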

7. Goals

Goals enable you to measure how often users complete specific actions. It is important to check that these are set up correctly and that they reflect your business KPIs. Additionally, previous users of the account may have set up all sorts of strange goal funnels to monitor drop-off points in the customer journey. If a goal isn’t pulling through any conversions from the last 7 days, something is wrong.

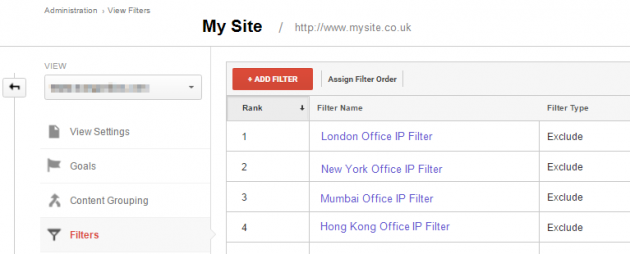
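With more than a handful of goals, the seven-day check is easy to automate once you have daily completions exported. The input format and function name below are hypothetical; the sketch simply lists goals whose completions add up to zero over the period supplied.

```python
def stale_goals(completions_by_goal):
    """Return the names of goals with no completions in the period.

    `completions_by_goal` maps a goal name to a list of daily completion
    counts, e.g. seven numbers for the last seven days (hypothetical input).
    """
    return sorted(
        name for name, daily in completions_by_goal.items() if sum(daily) == 0
    )


last_seven_days = {
    "Newsletter sign-up": [3, 5, 2, 0, 4, 6, 1],
    "Old brochure download": [0, 0, 0, 0, 0, 0, 0],
}
print(stale_goals(last_seven_days))
```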

8. Exclude internal traffic

Presumably, you’ll want to track how external users are interacting with your website. If internal traffic from you or your office is piled into the mix, it can be difficult to understand true customer behaviours. To prevent this from happening, you’ll need to create an IP address filter for your view, excluding your IP address.
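The filter itself is set up in the GA admin screens, but the matching logic is simple enough to sketch, for instance to estimate from server logs how much of your traffic is internal. The network below is a reserved documentation range used as a placeholder; swap in your office’s real address or range.

```python
import ipaddress

# Placeholder: 203.0.113.0/24 is reserved for documentation examples.
OFFICE_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def is_internal(ip):
    """Return True if the address belongs to one of the office networks."""
    addr = ipaddress.ip_address(ip)
    return any(addr in network for network in OFFICE_NETWORKS)


hits = ["203.0.113.42", "198.51.100.7", "203.0.113.9"]
external_hits = [ip for ip in hits if not is_internal(ip)]
print(external_hits)
```

Matching on a network rather than a single address helps when your office has more than one public IP, though it won’t catch colleagues working from home.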

Think something else belongs in this list? Leave a comment below and let us know.