How to Use Social Media Mining for Powerful Audience Insights

Social media mining (SMM) is the hallowed art of trawling through an abundance of openly accessible online conversations with the goal of building deeper insight into your audience. And that’s exactly what it comes down to – an art. Mining is the use of data science techniques to unearth hidden patterns buried in text; shaping the findings into something useful is where the mined ore is chiselled into a masterpiece of insight.

Many introductory articles delve into sentiment analysis of tweets related to U.S. elections, but such scenarios are uncommon in typical client briefs. Moreover, social data offers far richer opportunities: X (formerly Twitter) boasts approximately 245 million daily active users, Reddit has around 97 million daily active users, and YouTube engages about 122 million users daily, all providing generous, publicly available APIs ripe for exploration. This doesn’t even account for the myriad specialised discussion forums accessible via web scraping tools.

The practice is typically divided into two waves: (1) data collection and (2) data analysis. Both are far more complex than they might first appear. Collection requires a ton of careful forethought and trial and error. Analysis is arguably even tougher, but therein lies the fruit of possibility.

This is a working guide for data scientists collaborating with teams who want audience insight, in-house or client-facing. The data scientist might be inclined to challenge themselves by amassing as much data as their systems allow, but the client is looking for quality. Ultimately, it’s about striking a balance between a trustworthy sample size and juicy insights.

Social Media Mining Approach

Objectives

Solidify the project objective. This is imperative not only for the usefulness of the result but also for your own mental wellbeing. An unfocussed analysis is going to leave you taking wild swings in the dark that are unlikely to hit the mark. Be clear on the goal, be it a generalist fact-finding mission or a much more specific request into how a target audience feels in reaction to a particular event. Some decent questions to base an SMM project around might be:

- What are the most common phrases used when talking about video streaming?

- Which combination of hashtags will drive the highest level of engagement for fashion-themed video content?

- Why do people get frustrated with and stop using my product?

- Which locations are people in the UK most looking forward to travelling to?

- How does our business’s brand awareness fare against that of our competitors?

Furthermore, why are you undertaking this work? Is it to inform a content strategy? To give insight into messaging? To help a business understand their positioning in the market? This will matter much more later on when translating the results into a workable piece, but it pays to establish it now. If the venture is to explore the language that resonates most strongly with your audience then your approach is likely to be heavily NLP-based, whereas if you are seeking to quantify the impact of your client’s social share-of-voice then it becomes a lot more numeric and signal-driven.

Targeting

The other thing to think about before diving in is your target audience. Typically, you will want to know what a specific audience is talking about, or you will want to know who is talking about a specific thing. In any case – where does your audience reside online? Only use a platform’s data if you are confident that a sizable chunk of your audience can be observed there.

X users have profiles, subreddits have a defined and often niche focus, and YouTube has specific content topics. This means we can survey specific online communities and really drill into the people important to your business.

Pro Tip: A bonus feature of X is X lists. These are curated lists of (usually) active users belonging to a particular audience niche, gamers for example. There are nice tools out there like Scoutzen that allow you to search through relevant lists on X and build up your list of highly relevant subjects to mine.

Of course, while I advocate an audience-first approach, sometimes a target audience could literally mean everybody and it is the topic rather than the audience that is important. The keyword-first approach can be messier since it’s difficult to anticipate what information you might get back from the APIs. Words can be very ambiguous, especially once they are stripped of their context.

Essentially, before collecting any data, think very carefully about the best way of acquiring those tweets, Reddit submissions, YouTube comments, forum threads etc. My personal preference is:

- Define the audience segment

- Identify profiles that match the criteria (lists, searching for keywords in user profiles etc.)

- Acquire the timelines/contributions of each profile
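The three steps above can be sketched roughly as follows. This is only an illustration, assuming the profiles have already been pulled into plain dictionaries: the field names (`handle`, `description`), the keyword set and the `fetch_timeline` callable are all hypothetical stand-ins for whatever your chosen API client gives you.

```python
import re
from typing import Callable, Iterable

# Step 1: define the audience segment. These keywords are made up for illustration.
SEGMENT_KEYWORDS = frozenset({"gamer", "esports", "streamer"})


def matches_segment(profile: dict, keywords: frozenset = SEGMENT_KEYWORDS) -> bool:
    """Step 2: does the profile description mention any segment keyword?"""
    words = set(re.findall(r"[a-z0-9]+", profile.get("description", "").lower()))
    return not words.isdisjoint(keywords)


def collect_audience(
    profiles: Iterable[dict],
    fetch_timeline: Callable[[str], list],
    keywords: frozenset = SEGMENT_KEYWORDS,
) -> dict:
    """Step 3: fetch contributions only for profiles that match the segment."""
    return {
        profile["handle"]: fetch_timeline(profile["handle"])
        for profile in profiles
        if matches_segment(profile, keywords)
    }


# Usage with a fake fetcher standing in for a real API call.
sample_profiles = [
    {"handle": "pixel_pat", "description": "Variety streamer, retro gamer."},
    {"handle": "bake_off_bob", "description": "Sourdough and cycling."},
]
audience = collect_audience(sample_profiles, lambda handle: [f"post by {handle}"])
print(audience)  # {'pixel_pat': ['post by pixel_pat']}
```

Matching on whole words in the bio is crude, but it keeps the segment definition explicit and easy to argue about with the client before any quota is spent.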

Gather Information

Now is the time to get your grubby little mitts on some meat to chew through. Pick your API(s) or forum(s) and write out your logic for acquiring the data you need – the profiles to acquire, the keywords to target etc. You will need to be mindful of timelines here and get this done early. APIs have rate limits and quotas, so you want to make sure you at least get close on your first few tries. Start by gathering some small samples and get intimately familiar with the data you receive. Shortcuts here will come crashing down hard later on.

It’s difficult to predict what might happen as you scale up your information retrieval. It can be scary. You don’t want to set your programme to amass as much social data as your API subscriptions allow only to find that the resulting dataset falls short with no means for a redo.

Start small. Save as you go. Scale up carefully.
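Here is one way that mantra might look in code. It is a sketch under assumptions rather than a recipe for any particular platform: `fetch` is whatever function you write around your API client, and `RateLimited` is a hypothetical exception that function raises when the API tells you to slow down.

```python
import json
import time
from pathlib import Path


class RateLimited(Exception):
    """Hypothetical error raised by your own fetch function on a rate-limit response."""


def collect(targets, fetch, out_path, max_targets=10, max_retries=3,
            base_delay=1.0, sleep=time.sleep):
    """Fetch a capped number of targets, appending each result to a JSONL file."""
    out_path = Path(out_path)
    done = set()
    if out_path.exists():
        # Resume: skip anything a previous run already saved.
        with out_path.open(encoding="utf-8") as fh:
            done = {json.loads(line)["target"] for line in fh if line.strip()}

    collected = 0
    with out_path.open("a", encoding="utf-8") as fh:
        for target in targets:
            if collected >= max_targets:  # start small
                break
            if target in done:
                continue
            for attempt in range(max_retries):
                try:
                    items = fetch(target)
                    break
                except RateLimited:
                    sleep(base_delay * 2 ** attempt)  # exponential backoff
            else:
                continue  # still rate limited; a later run will retry this target
            fh.write(json.dumps({"target": target, "items": items}) + "\n")
            fh.flush()  # save as you go
            collected += 1
    return collected
```

Run it with a small `max_targets` first, eyeball the file, then raise the cap. Because finished targets are skipped on rerun, scaling up never re-spends quota on data you already hold.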

Setting the Scene for Analysis

Before rushing to apply the latest toys in NLP, it’s important to build up a picture of your audience so that you know where your insights are coming from and can be sure they express the opinions of people relevant to the campaign. Quite often you’ll spot bots, competitions and spam tainting a large section of your data – now is the time to uncover that.
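A first pass at spotting that noise does not need anything clever. The sketch below flags posts that look like competition entries or that appear many times over once links and mentions are stripped out. The regular expression and the `max_copies` threshold are illustrative guesses, and the `text` field name is an assumption about how you have stored your posts.

```python
import re
from collections import Counter

# Illustrative patterns only; tune these against your own sample.
COMPETITION_PATTERN = re.compile(
    r"\b(giveaway|rt to win|retweet to win|enter to win)\b", re.IGNORECASE
)


def normalise(text):
    """Lowercase and strip URLs, mentions and spare whitespace so copies collide."""
    text = re.sub(r"https?://\S+|@\w+", "", text.lower())
    return " ".join(text.split())


def flag_suspect_posts(posts, max_copies=3):
    """Return (post, reasons) pairs for posts that look like competitions or spam."""
    copies = Counter(normalise(post["text"]) for post in posts)
    flagged = []
    for post in posts:
        reasons = []
        if COMPETITION_PATTERN.search(post["text"]):
            reasons.append("competition")
        if copies[normalise(post["text"])] > max_copies:
            reasons.append("duplicate")
        if reasons:
            flagged.append((post, reasons))
    return flagged
```

Treat the output as a list to review by eye rather than something to delete automatically; a genuinely viral phrase can look a lot like spam.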

Suss out your Audience

I like to start by painting a picture of the people the data stems from. It is good to be clear about the lens you’re analysing data through. Who is your audience?

- How many distinct users did you find data for?

- How influential are they (engagements, followers etc.)?

- How active are they?

- Where are they based geographically?

- What common patterns stand out from their profile descriptions?

- Is there a focus towards a particular industry vertical?
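Several of these questions boil down to simple counting. As a minimal sketch, assuming each collected post carries `user`, `followers` and an optional `location` field (names I have made up here, not anything a specific API guarantees), a first audience summary might look like this:

```python
from collections import Counter
from statistics import median


def summarise_audience(posts):
    """Roll posts up to one record per user, then describe the audience."""
    users = {}
    for post in posts:
        record = users.setdefault(
            post["user"],
            {"posts": 0, "followers": post["followers"], "location": post.get("location")},
        )
        record["posts"] += 1

    locations = Counter(u["location"] for u in users.values() if u["location"])
    return {
        "distinct_users": len(users),                                            # how many?
        "median_followers": median(u["followers"] for u in users.values()),      # how influential?
        "median_posts_per_user": median(u["posts"] for u in users.values()),     # how active?
        "top_locations": locations.most_common(3),                               # where?
    }


sample = [
    {"user": "ana", "followers": 1200, "location": "Leeds"},
    {"user": "ana", "followers": 1200, "location": "Leeds"},
    {"user": "ben", "followers": 80, "location": "Leeds"},
    {"user": "cy", "followers": 300},
]
summary = summarise_audience(sample)
```

Medians are used rather than means because follower counts are usually dominated by a handful of very large accounts.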

Run an entity analysis across your profile descriptions to discover common and distinct things that crop up across large segments of your audience. Technologies such as spaCy offer free state-of-the-art entity detection and can surface really useful insights into the composition of your sample audience. This can be applied to reveal the types of careers users are leading, specific attributes they use to define themselves or certain location signals that tell you a little more about where people live.
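As a rough sketch of that entity pass with spaCy: the counting function below works on anything shaped like a spaCy `Doc` (it only reads `doc.ents`, `ent.text` and `ent.label_`), and the second function shows how you might feed it real profile descriptions. The choice of the `en_core_web_sm` model and of the `GPE`, `ORG` and `NORP` labels is my assumption for illustration; pick whatever suits your audience.

```python
from collections import Counter


def count_entities(docs, labels=("GPE", "ORG", "NORP")):
    """Count how many profiles mention each entity, restricted to the given labels."""
    counts = Counter()
    for doc in docs:
        # A set, so one verbose bio repeating itself only counts once.
        seen = {
            (ent.text.lower(), ent.label_)
            for ent in doc.ents
            if ent.label_ in labels
        }
        counts.update(seen)
    return counts


def entities_from_descriptions(descriptions, model="en_core_web_sm"):
    """Run spaCy over raw descriptions. Needs spaCy and the named model installed."""
    import spacy  # imported lazily so the counting logic can be used without it

    nlp = spacy.load(model)
    return count_entities(nlp.pipe(descriptions))
```

Calling `entities_from_descriptions(bios).most_common(20)` gives a quick list to scan for locations and organisations. Expect some noise: short, emoji-heavy bios are not the text these models were trained on.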

Once you discover location-type entities, you can run them through a geocoding tool to estimate the coordinates of each user. A good free tool I can vouch for is Batch geocoder for journalists; geocoding APIs can be expensive! This is a really powerful technique for creating localised insight by understanding nuances between territories – particularly useful for international campaigns.

Word clouds do a decent job of visualising common language patterns within your profile set. Or as I like to refer to word clouds: the cheapest trick in the data scientist’s toolbox. Yuck. But it does a nice job sometimes.

Data Analysis

You know what time it is. You worked hard to get here, and this is where the fun starts. Crack open the toy chest and bust out the big guns.

Techniques & Frameworks for Analysis

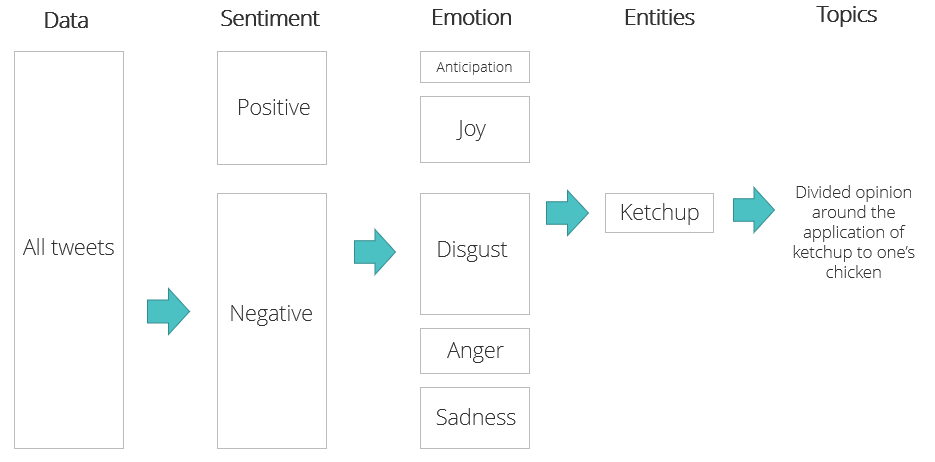

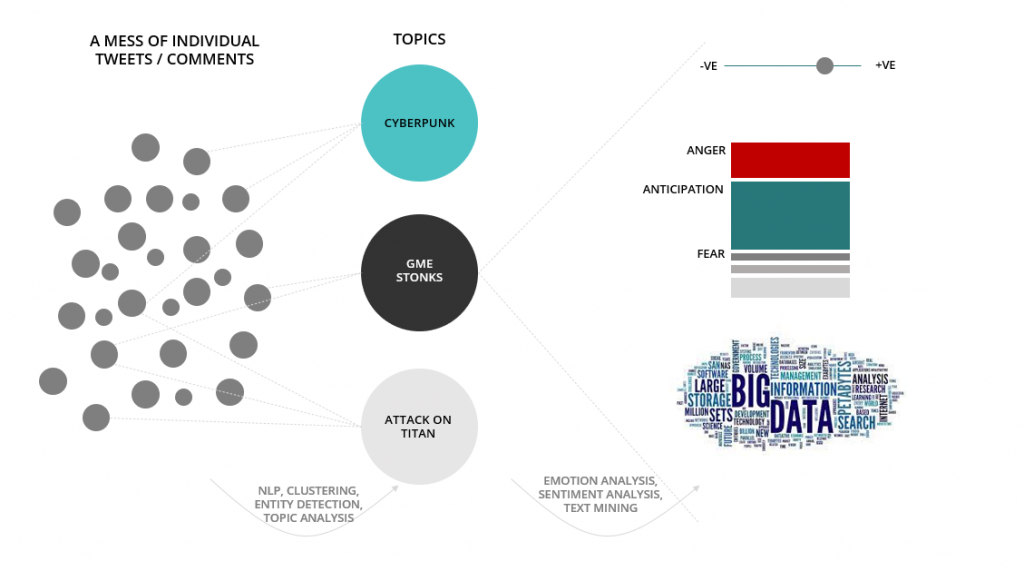

In a previous post I posited a framework for analysing tweets that can be broadly applied to any similar data. It is a very helpful way to structure an analysis of tweets or comments:

A quick and simple base is to start by separating statuses between those expressing negativity and those expounding positivity. From here, we can use data science to split text documents into different categories of emotion: angry tweets expressing outrage, tweets expressing elation and joy etc. From emotions we can use topic and entity analyses to extract hot themes and topics of conversation. We applied the above example to Nando’s audience and found a segment of users expressing disgust centred around guests slathering their cheeky chicken in ketchup rather than their painstakingly crafted Peri Peri specialties. The analysis unearthed a highly divisive topic likely to generate strong engagement through content.
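To make the shape of that framework concrete, here is a deliberately tiny sketch: label each post with a polarity and an emotion, then count the remaining words within each label to see what the emotion is about. The lexicon and stopword list are toys I have invented for illustration; this is not the method used in the original analysis, and a real project would swap in a trained sentiment or emotion classifier and proper topic or entity analysis.

```python
import re
from collections import Counter, defaultdict

# Toy lexicon, invented for illustration: word -> (polarity, emotion).
LEXICON = {
    "love": ("positive", "joy"),
    "delicious": ("positive", "joy"),
    "gross": ("negative", "disgust"),
    "disgusting": ("negative", "disgust"),
    "furious": ("negative", "anger"),
}
STOPWORDS = {"the", "a", "on", "is", "i", "my", "and", "this", "so", "who", "puts"}


def tokens(text):
    return re.findall(r"[a-z']+", text.lower())


def classify(text):
    """Return the most common (polarity, emotion) among lexicon hits, or None."""
    hits = [LEXICON[t] for t in tokens(text) if t in LEXICON]
    return Counter(hits).most_common(1)[0][0] if hits else None


def themes_by_emotion(posts, top_n=3):
    """Group posts by (polarity, emotion) and list the most frequent other words."""
    words = defaultdict(Counter)
    for text in posts:
        label = classify(text)
        if label is None:
            continue
        words[label].update(
            t for t in tokens(text) if t not in LEXICON and t not in STOPWORDS
        )
    return {label: [w for w, _ in c.most_common(top_n)] for label, c in words.items()}


themes = themes_by_emotion([
    "Ketchup on peri peri chicken is gross",
    "Who puts ketchup on chicken? Disgusting",
    "I love this chicken",
])
```

Even on three made-up posts the structure shows through: the disgust bucket is about ketchup, which is the kind of divisive theme the framework is meant to surface.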

You can think of it in terms of abstracting common themes from a horde of unstructured text data. You have a huge amount of untapped text chock-full of potential insight and you need some way to aggregate and express that. Linguistic analysis techniques (topic analysis, entity detection, document clustering etc.) excel at extracting these patterns, but their output can be incredibly challenging to interpret. This can be a big sticking point in the analysis workflow but I’m here to swaddle you in a blanket of reassurance. Just keep reading, you’re in safe hands.

What to do When You’re Stuck

You’ve landed in the analysis pit of despair. It can be a dark place having done all this work only to not know what it means or how to get something useful from it. It is very difficult to bridge the gap from data sciencey stuff to businessy stuff.

There is no analysis in existence that can do this part for you. We’re just not that advanced yet. Entity detection is messy and topic analysis can be vague and bizarre. It’s up to you to interpret your findings. These data science techniques guide us towards the real insight, and often that insight is shrouded by the mess that naturally comes with social media.

Think of it this way. The results of these techniques aren’t the finished product – they are a guide pointing you in the right direction. Think a little more abstractly, study the findings – what patterns are you noticing as a human observer? What stands out to you and how does that relate to actual people and their interests?

Next, think about looping engagement data in. Do your topics, phrases and entities make more sense when you rank them by the engagement they attract rather than by how frequently they’re brought up?
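One simple way to try this is to rank the same terms twice, once by how many posts mention them and once by the average engagement of those posts, and compare the two lists. The sketch below assumes each post is a dictionary with `text` and a single numeric `engagement` field (for example likes plus replies plus shares); both names are my own, as is the `min_posts` cut-off that stops one lucky post from topping the chart.

```python
import re
from collections import defaultdict


def rank_terms(posts, min_posts=2):
    """Return (terms by frequency, terms by mean engagement per mentioning post)."""
    stats = defaultdict(lambda: [0, 0])  # term -> [post count, total engagement]
    for post in posts:
        # A set, so a term repeated within one post is only counted once.
        for term in set(re.findall(r"#?\w+", post["text"].lower())):
            stats[term][0] += 1
            stats[term][1] += post["engagement"]

    eligible = {t: s for t, s in stats.items() if s[0] >= min_posts}
    by_frequency = sorted(eligible, key=lambda t: (-eligible[t][0], t))
    by_engagement = sorted(eligible, key=lambda t: (-eligible[t][1] / eligible[t][0], t))
    return by_frequency, by_engagement


sample_posts = [
    {"text": "new trailer", "engagement": 2},
    {"text": "new trailer today", "engagement": 4},
    {"text": "new soundtrack", "engagement": 90},
    {"text": "soundtrack is great", "engagement": 110},
]
by_frequency, by_engagement = rank_terms(sample_posts)
print(by_frequency)   # ['new', 'soundtrack', 'trailer']
print(by_engagement)  # ['soundtrack', 'new', 'trailer']
```

Terms that climb the second list are the ones people react to rather than merely repeat, which is often closer to what a content brief is really asking.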

If you’re still stuck, take it back to basics. What hashtags are trending? Now what other phrases surround those hashtags? Which hashtags create the most engagement and what is common across them?
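The back-to-basics hashtag questions can be answered with a few lines of counting. This is a sketch under the same assumptions as before (posts as dictionaries with hypothetical `text` and `engagement` fields); it does no stopword filtering, so expect filler words in the output until you add some.

```python
import re
from collections import Counter, defaultdict

HASHTAG = re.compile(r"#\w+")


def hashtag_context(posts, top_n=3):
    """For each hashtag: post count, mean engagement and the words that surround it."""
    nearby = defaultdict(Counter)
    engagement = defaultdict(list)
    for post in posts:
        text = post["text"].lower()
        tags = set(HASHTAG.findall(text))
        words = set(re.findall(r"\w+", HASHTAG.sub(" ", text)))  # text minus hashtags
        for tag in tags:
            nearby[tag].update(words)
            engagement[tag].append(post["engagement"])

    return {
        tag: {
            "posts": len(values),
            "mean_engagement": sum(values) / len(values),
            "nearby": [word for word, _ in nearby[tag].most_common(top_n)],
        }
        for tag, values in engagement.items()
    }


context = hashtag_context([
    {"text": "Packing for Lisbon #holiday", "engagement": 10},
    {"text": "Lisbon sunsets #holiday #travel", "engagement": 30},
    {"text": "Rainy commute #travel", "engagement": 2},
])
```

Sorting the result by `mean_engagement` and reading down the `nearby` words is a quick way to see what the best-performing hashtags have in common.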

Just keep the end goal in mind and think about how the tools might help reveal some of that insight, rather than how you can interpret the insight from these tools.

The Insights Document

The final and most important step – actionable insight. How do your findings answer the brief you set out to research? What content recommendations can you make from your topic analysis? What implications do your findings have for the client’s strategy? What advice can you dispense about the best way to engage the target audience? Don’t leave the recipients to do all the work to make sense of it all.

A nice touch is to open up your raw data (posts etc.). It’s fascinating when you have unrestricted access to your target audience. It also gives a strong sense of the scale that went into the project and assures the client of the quality. Both help build confidence in the analysis.

And if you really want to go into detail with some of the analysis, think about how you visualise the data. Can your end user get direct value from your charts?

The gold standard is that anybody should be able to understand your insights. The end user should be able to take your insight and apply it right away – something they can use today.
